Project Overview - Example: F1 Data Pipeline

A end-to-end data engineering pipeline that ingests real-time Formula 1 telemetry and event data from the OpenF1 API, stores it in object storage, loads it into a cloud data warehouse, and transforms it into analytics-ready models for reporting and analysis.

Overview

This project demonstrates a production-style data pipeline built around an F1 dataset. It covers the full data engineering stack: orchestration, ingestion, raw archiving, warehouse loading, data modelling, and analytics — all running locally via Docker with BigQuery as the managed analytical store. It also demonstrates best practices in pipeline reliability, featuring custom error handling, automated retries, and dependency management to showcase a production-grade environment.

Key capabilities: - Weekly automated ingestion of F1 session data (meetings, laps, positions, car telemetry, pit stops, stints, and more) from the OpenF1 REST API - Immutable raw JSON archive in MinIO (S3-compatible object storage) as the source of truth - Idempotent, hash-verified loading into BigQuery bronze tables with a full audit trail - Multi-layer transformation (bronze → silver → gold) using dbt with BigQuery adapter - Dimensional model exposing driver, session, and meeting dimensions alongside fact tables for laps, positions, pits, and stints - Pre-built gold-layer analytical models: lap time progression, pit stop duration by team, and qualifying teammate gap analysis

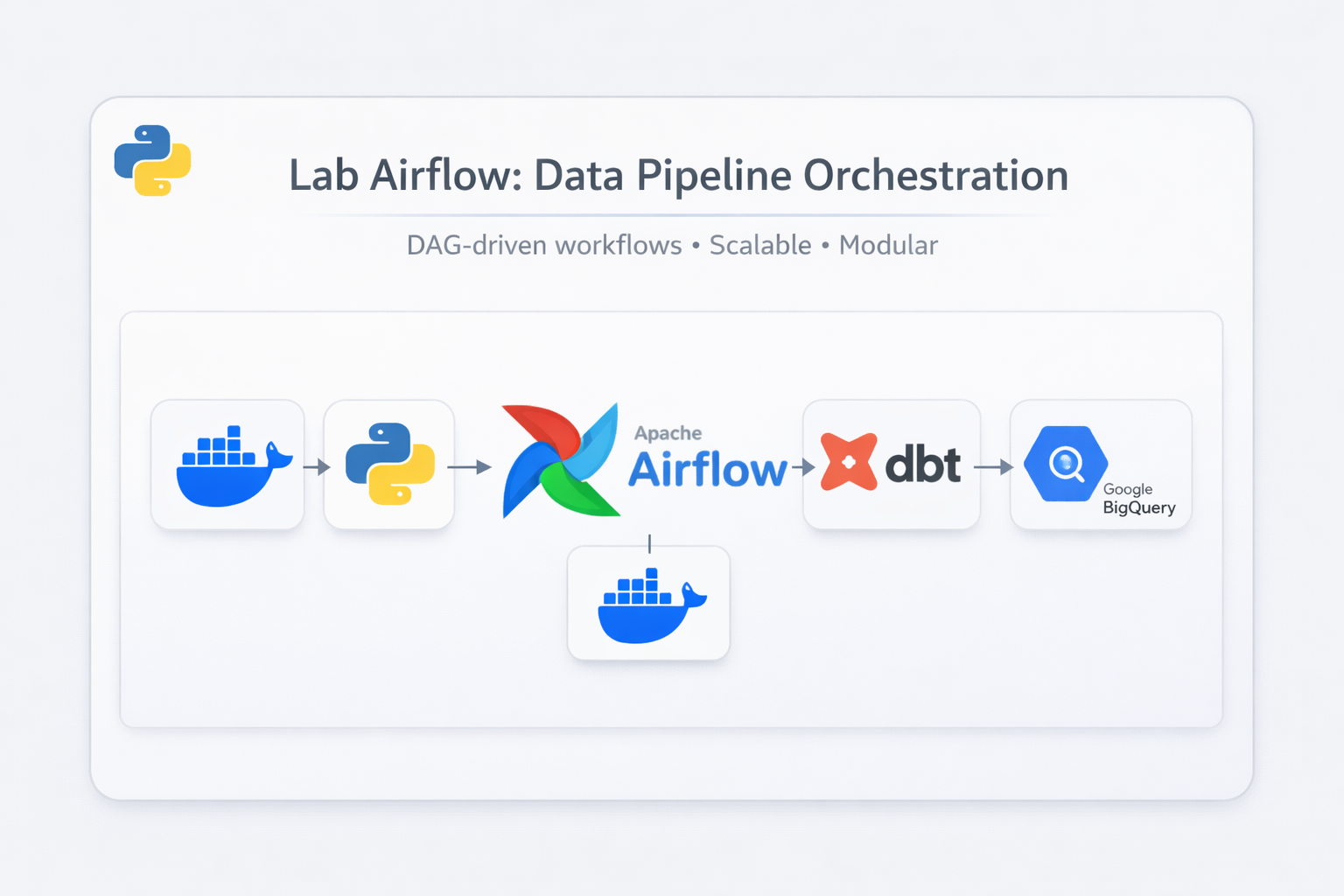

Tech stack: Apache Airflow (Astronomer Runtime) · MinIO · Google BigQuery · dbt-bigquery · Docker Compose

Architecture

The pipeline is structured as a classic medallion architecture across three logical layers, orchestrated end-to-end by Airflow.

┌────────────────────────────────────────────────────────────────────┐

│ Orchestration │

│ Apache Airflow (Astronomer) │

│ f1_dag → bigquery_f1_load_dag → dbt_f1_dag │

└──────────────────────────┬─────────────────────────────────────────┘

│

┌──────────────▼──────────────┐

│ Ingestion Layer │

│ OpenF1 REST API │

│ (meetings, sessions, │

│ laps, car data, etc.) │

└──────────────┬──────────────┘

│ Raw JSON (per entity, per meeting)

┌──────────────▼───────────────┐

│ Raw Archive (Bronze) │

│ MinIO Object Storage │

│ (immutable source of truth)│

└──────────────┬───────────────┘

│ SHA-256 content-hash verified load jobs

┌──────────────▼──────────────┐

│ BigQuery Bronze Layer │

│ tables (partitioned │

│ + clustered) │

│ bronze.load_audit │

└──────────────┬──────────────┘

│ dbt MERGE / incremental models

┌──────────────▼──────────────┐

│ BigQuery Silver Layer │

│ Cleaned, deduplicated │

│ dim_ + fct_ tables │

└──────────────┬──────────────┘

│ dbt aggregations

┌──────────────▼───────────────┐

│ BigQuery Gold Layer │

│ Analytics-ready models │

│ (lap progression, pit stop │

│ duration, qualifying gap) │

└──────────────────────────────┘

Key Decisions

ADR-001 — BigQuery bronze layer partitioning strategy

High-volume time-series tables (car_data, positions, locations, intervals) are partitioned by DATE(date) at day granularity, aligning partitions with individual race sessions. The laps table uses DATE(_loaded_at) because the event-time column date_start is nullable for aborted laps. Small reference tables (meetings, sessions, drivers, stints) use clustering only — their size makes partition overhead counterproductive. All applicable tables cluster on (meeting_key, session_key, driver_number), mirroring the query patterns used throughout the pipeline.

ADR-002 — ClickHouse replaced by BigQuery as the analytical store

The original bronze layer ran on a self-hosted ClickHouse instance (Docker container). At F1 data volumes — particularly the 3.7 Hz streams from car_data, positions, and locations — ClickHouse consumed ~5 GB of the 9 GB Docker Desktop allocation, causing repeated OOM kills and Docker daemon instability. Migrating to BigQuery offloaded all storage and compute to a managed service, leaving Docker running only Airflow and MinIO. This also replaced ClickHouse's native ReplacingMergeTree deduplication with dbt MERGE-based incremental models.

ADR-003 — MinIO retained as raw archive after BigQuery migration

Rather than designating BigQuery as the single source of truth, MinIO continues to store the original raw JSON responses from the OpenF1 API. This separation of concerns means the ingestion leg (API → MinIO) and the loading leg (MinIO → BigQuery) can fail and be retried independently. It also enables full re-derivation of any bronze table from scratch if a schema change, loader bug, or accidental table drop requires it.

ADR-004 — Reserved keyword interval retained with backtick quoting

The OpenF1 API returns a field named interval in the intervals endpoint. Rather than renaming it (e.g. interval_to_car_ahead) and diverging from the source schema, the original name is preserved and quoted with backticks in all DDL and SQL. This keeps the bronze schema directly readable against the API documentation, at the minor cost of consistent quoting in downstream dbt models.

ADR-005 — Content-hash idempotency for bronze loads

Bronze loaders use WRITE_APPEND load jobs, which would duplicate rows on DAG retries or backfills. Three strategies were evaluated: file-existence check, unconditional DELETE + re-append, and content-hash comparison. The chosen approach computes SHA-256 of the raw MinIO bytes and compares it against a bronze.load_audit table. Unchanged files are skipped entirely; files with a differing hash trigger a targeted DELETE then reload. The hash is recorded only after a successful insert, so a mid-run crash leaves no record and the next run retries cleanly. This also detects silent upstream corrections — if OpenF1 updates historical data and the ingestion job rewrites a MinIO file, the hash difference triggers a clean reload that a file-existence check would miss.